Bad Chart Thursday: NHS Weekend Death Trap of Doom and Death

Terrifying news from the UK: You are more likely to die if admitted to the hospital on the weekend than on a weekday. This can only mean one thing–patients admitted on weekends are being locked in and forced to battle to the death.

Kidding, kidding. Leaping to a single conclusion like that without evidence or consideration of multiple factors would be at best irresponsible and at worst dangerous.

Enter stage right: British Health Secretary Jeremy Hunt and the British Conservative Party, who have decided that the higher mortality rate among weekend admissions is due to reduced services on the weekends, which can only be solved by creating a “seven-day NHS.”

The problem is that this conclusion seems to have as much evidence to support it as my Battle Royale idea above.

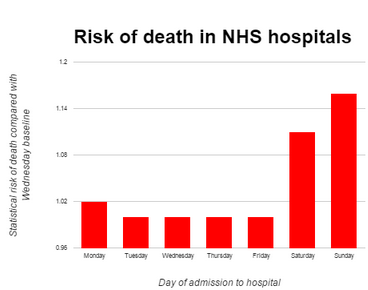

Let’s look at this issue through the lens of today’s bad chart, from the Conservative Home article by Paul Abbott entitled “The graph that shows why Hunt is right about a Seven Day NHS”:

[Chart axes: the y-axis shows statistical risk of death compared with a Wednesday baseline; the x-axis shows day of admission to hospital.]

Right away, before we even get into the flaws in the chart itself, we get a perfect illustration of the leap in logic that is at the heart of the Conservative argument. The graph does not show “why Hunt is right about a Seven Day NHS.” It doesn’t show he’s wrong, either. The graph does not give us any information one way or the other about a seven-day NHS.

Abbott is assuming correlation equals causation. The flawed logic goes like this: The chart shows an increased rate of death on weekends. Services are reduced on weekends. Therefore, reduced services are causing the increase.

But you can make any number of ridiculous claims using that logic alone, without any supporting evidence to show a causal link. For example: The chart shows an increased rate of death on weekends. Television programming is different on the weekends than on weekdays. Therefore, being stuck in the hospital with only crappy weekend programming to entertain/distract people is sapping their will to live.

You don’t even need to jump to the lethality of Murder She Wrote reruns to come up with any number of possible reasons for the increase in weekend hospital deaths. The first thing that popped into my mind was that more deaths would be expected among those admitted on weekends because people going in on the weekend are more likely to have an urgent or emergent problem. In the US, it’s more expensive to seek care over the weekend for most people, so seeking care over the weekend rather than waiting to make an appointment during the week often signals a problem that can’t wait to be addressed. Of course emergencies and urgent symptoms are going to be more likely to indicate a life-threatening problem.

The expense of weekend healthcare might not be an issue for patients in the UK, so maybe this conclusion is not accurate (although I can still see not wasting the weekend by going into the hospital for a non-urgent problem for those who work regular business hours).

Another possible explanation is that hospitals are less likely to admit patients who come in on the weekends unless the health problem is urgent, especially if services on the weekend are reduced–not BECAUSE of reduced services, mind you, but because the hospital is set up to provide routine care during the week, so that would be postponed, increasing the proportion of people with non-routine, higher risk problems among weekend admissions.

On a similar note, more weekday admissions are likely to be for routine, scheduled, and/or elective care, which would throw off the risk ratio when comparing weekends to weekdays. Some, all, or even none of these could directly affect the weekend admission death rate.
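To make the case-mix point concrete, here is a minimal Python sketch. Every number in it is hypothetical and chosen only for illustration; none comes from the NHS data. Each type of admission is given exactly the same death risk on weekdays and weekends, and the only thing that changes is the mix of elective and emergency admissions.

```python
# Hypothetical numbers, chosen only to illustrate the case-mix argument.
# Each admission type has the SAME death risk on weekdays and weekends.
RISK = {"elective": 0.005, "emergency": 0.04}

# Assumed admission counts: weekends have far fewer elective cases.
ADMISSIONS = {
    "weekday": {"elective": 6000, "emergency": 4000},
    "weekend": {"elective": 500, "emergency": 3500},
}


def crude_death_rate(mix):
    """Expected deaths divided by admissions, ignoring admission type."""
    deaths = sum(count * RISK[kind] for kind, count in mix.items())
    return deaths / sum(mix.values())


weekday_rate = crude_death_rate(ADMISSIONS["weekday"])
weekend_rate = crude_death_rate(ADMISSIONS["weekend"])
crude_ratio = weekend_rate / weekday_rate

print(f"weekday crude rate: {weekday_rate:.4f}")
print(f"weekend crude rate: {weekend_rate:.4f}")
print(f"crude weekend/weekday ratio: {crude_ratio:.2f}")
```

With these made-up inputs the crude weekend rate comes out well above the weekday rate, even though no individual patient is at any greater risk for having arrived on a Saturday. That is the sense in which an unadjusted comparison can mislead.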

The point is that there are other possible reasons that alone or (more likely) together might explain the increase in deaths. Without evidence supporting a causal link, we can’t draw conclusions about the cause of the increase, much less attempt to make policy changes based on these conclusions, especially policy changes that are potentially expensive and have life-or-death consequences.

So Abbott’s graph does not show the conclusion he touts in the title. Even the increases in deaths on Saturday and Sunday are exaggerated by starting the y-axis at 0.96 instead of 0. Here’s the same chart with a corrected y-axis:

EDIT 11/13/2015, 10am EST: Starting at 0 does not actually make sense for relative risk. A column chart is actually a misleading presentation for relative risk in the first place, so I’ve cut my own misleading corrected chart. Below is a more appropriate chart of the data, which is the source of Abbott’s numbers (see below). Thanks to Jamie Bernstein for pointing this out.
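For a rough sense of how much the truncated axis exaggerates things, here is a small Python sketch comparing the ratio of the values with the ratio of the bar heights as drawn. It assumes the Sunday bar sits at about 1.16 (the 16% figure discussed in the comments) and that the axis starts at 0.96; the function name is just for illustration.

```python
def drawn_height(value, axis_start):
    """Height of a bar as drawn when the y-axis starts at axis_start."""
    return value - axis_start


AXIS_START = 0.96  # where the chart's y-axis begins
WEDNESDAY = 1.00   # baseline relative risk
SUNDAY = 1.16      # assumed from the 16% Sunday figure

actual_ratio = SUNDAY / WEDNESDAY
visual_ratio = (
    drawn_height(SUNDAY, AXIS_START) / drawn_height(WEDNESDAY, AXIS_START)
)

print(f"ratio of the values: {actual_ratio:.2f}")
print(f"ratio of the drawn bar heights: {visual_ratio:.1f}")
```

Under those assumptions the Sunday bar is drawn roughly five times as tall as the Wednesday bar, while the underlying value is only 1.16 times as large.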

There is an increase, but is it significant enough to warrant the reaction from Hunt, Abbott, and others pushing for a seven-day NHS?

To find out, we have to go back to the data themselves. Abbott claims in his article that the graph is from the Journal of the Royal Society of Medicine. When asked for more details on Twitter, he provided this link, which shows a figure from Freemantle et al., “Weekend hospitalization and additional risk of death: An analysis of inpatient data,” published in the Journal of the Royal Society of Medicine in 2012. The full text is available at that link, and the paper does not include Abbott’s graph, although the figure he links to appears to be the source of his data.

In fact, the authors of this paper recently provided an updated analysis using more recent numbers (2013-14), published in the BMJ under the title “Increased mortality associated with weekend hospital admission: a case for expanded seven day services?”

This analysis appears to be at the heart of the statements Hunt has been making since July about “11,000 excess deaths” annually in the UK, the alleged number of deaths above the Wednesday baseline that occur on weekends. Oh, and by the way, “weekend” now apparently includes Friday and Monday.

The subtitle of Freemantle and colleagues’ analysis certainly suggests that they have found a causal link between these deaths and reduced services on weekends, yet the analysis itself does not study this link, and in the interpretation section, the authors write, “It is not possible to ascertain the extent to which these excess deaths may be preventable; to assume that they are avoidable would be rash and misleading.”

Hmmm, “rash and misleading,” kind of like the subtitle of the analysis? Oh, but they have a question mark at the end! It’s not a conclusion. They’re “just asking questions,” right? Sure, these questions suggest a conclusion and ignore the many, many other questions raised, but you can’t fault the researchers for conclusions other people draw from their innocent questions.

Seem familiar? I do believe we might be seeing the medical research equivalent of JAQing off.

Even if the difference in deaths is statistically significant and the methodology sound (which, judging from the criticism, is not a safe assumption), we don’t know if these deaths are even preventable, and the effects on the public of believing they risk death or poorer quality care on the weekends are themselves potentially harmful.

In fact, a group of physicians has started to document this, what they are calling the “Hunt effect,” the number of people who now avoid seeking care on the weekends out of fear created by Hunt’s misleading statements as well as the health outcomes for these people who delayed care. Whether they are able to demonstrate this effect as significant and conclusive remains to be seen, but it nevertheless illustrates that “just asking questions” and drawing causal conclusions based solely on correlation are potentially harmful even before any changes are implemented based on these flawed conclusions.

I personally think the deaths are worth looking into, even though it seems likely that even if there is a significant increase (still debatable), most are not preventable. But this is difficult to do when people have already made up their minds thanks to the success these politicians and scientists have had in trolling England.

Definitely a bad chart, but I think you’re being a bit unfair to the original study that seems to have inspired Hunt. I was inspired by your criticism to take a closer look at the original work, since it seemed really surprising to me that such basic errors in statistical reasoning would have made it past the reviewers at a high-quality journal like BMJ. And, in fact, Freemantle et al. seem to have been a bit more sophisticated than that, so much so that their data actually don’t even say anything like what Paul Abbott’s chart shows. I’m not really sure whether Freemantle et al. are right, but if they’re wrong, it’s for much more subtle reasons than this.

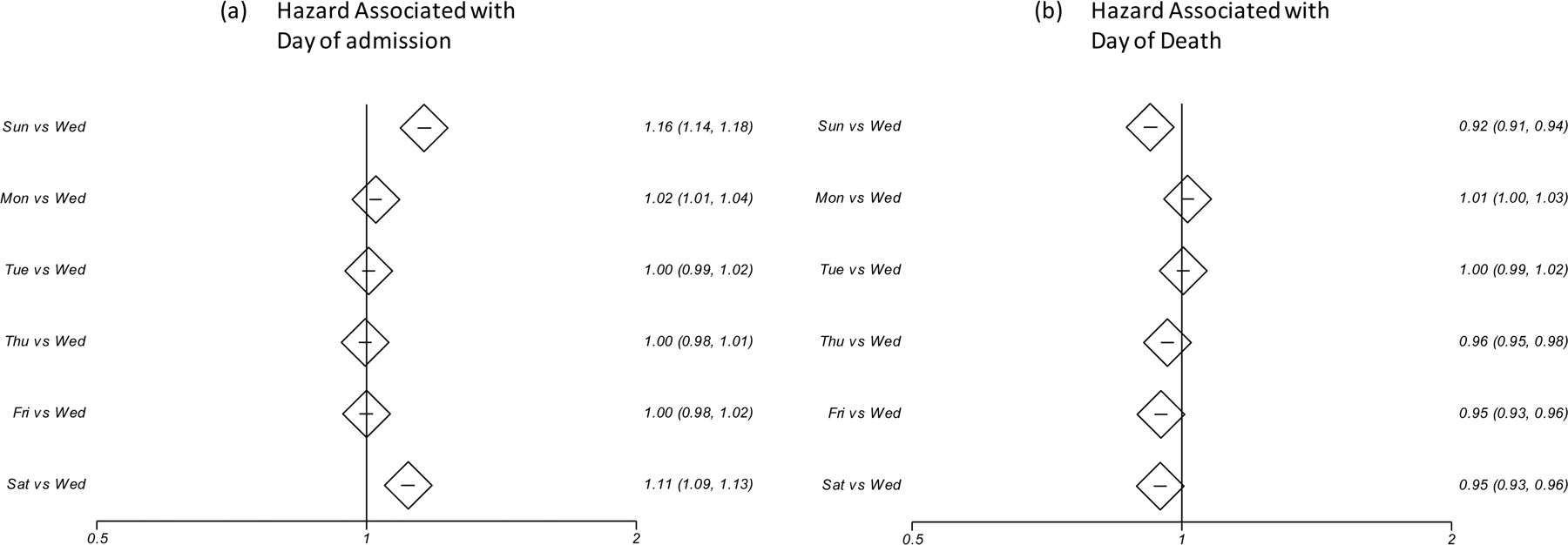

Your suggestions about various alternative explanations for the “weekend effect” are absolutely on point. So much so that many of them were explicitly included as factors in the model that Freemantle et al. developed! Basically, their approach seems to have been something like, “Let’s build a statistical model including as many factors as we can think of that could explain increased mortality for weekend admissions, and then see if there’s still anything left we can’t explain.” According to their 2012 paper (thank you for including the links to the original research, by the way! Major props for that), their model included “age; sex; ethnicity; whether or not the admission was classified as an emergency; source of admission (e.g. from home or transfer from another hospital); diagnostic group (as Clinical Classification Software Categories [CCS]); number of previous emergency admissions; number of previous complex admissions; Charlson Index of co-morbidities; social deprivation; hospital trust; day of the year (seasonality); and day of admission.” And it turns out that you’re absolutely right; their model excluding day of admission shows that patients who came to the hospital on the weekend already had the deck stacked against them and were already at greater risk of death within 30 days (Figure 1 in their 2015 paper). But, after accounting for all these factors, the “weekend effect” was still not fully explained. Pretty much everything that Freemantle et al. go on to talk about in their papers is about the portion of the “weekend effect” which is not explained by the rest of their model.

And that gets to why Paul Abbott’s chart is utterly wrong: the y-axis label is not what Freemantle et al. reported (unless you’re very generous in your interpretation of “statistical risk of death”). It looks like it’s communicating “you’re 16% more likely to die if you’re admitted to the hospital on a Sunday,” but what the original analysis actually said was “for a given level of risk due to any other factors in our model, the probability of death after being admitted to the hospital on a Sunday is 16% greater.”

So really, what Freemantle et al. are arguing is something like, “We’ve tried to explain the increased mortality of patients admitted on the weekend with as many things as we can think of that might be correlated with the fact that they’ve been admitted on the weekend, and there’s still a lot of increased mortality we can’t explain. What else is different about the weekend versus a weekday? Well, one thing is that there are decreased services available on the weekend. Maybe that’s the cause. This is a pretty big effect, so let’s discuss increasing services on the weekends as a possible solution.”

Now, I think their reasoning isn’t entirely sound. The most obvious thing is that maybe there are other important factors influencing patient risk which are correlated with the weekend and which Freemantle et al. didn’t think to include in their model. This is something like a distant cousin to the “I can’t imagine how the Egyptians built the pyramids, therefore aliens did it” argument. Except in this case, the idea that something in the institutional structure of hospital admissions might affect patient health isn’t, you know, stupid.

The other problem I have with attributing the disparity to decreased services on the weekend is that their own data show that simply being in the hospital on the weekend doesn’t increase risk; it’s being admitted on the weekend that does. So if they’re right, it should be possible to identify some way in which the admissions procedure differs on the weekend and/or a reason why reduced services only affect newly admitted patients.

I’m inclined to think that the most likely explanation is that their model has simply failed to capture some other explanatory variables (which in the end isn’t really that different from your criticism, I suppose!), but it also doesn’t seem totally unreasonable to me that there could be something in the institutional structure of the hospital that’s contributing (like reduced weekend services), and it’s totally appropriate to encourage investigation on that front.

But to me, the most interesting part of this story is the critical responses in the BMJ, several of which you linked to. Distressingly many of them seem to have fundamentally failed to understand the statistical method being used, making what would be quite elementary errors for someone who had been educated in the topic. In their defense, if I were writing such a paper for a generalist journal like BMJ (and I should say I’m definitely not an epidemiologist or medical doctor of any type), I probably would have tried to ensure that the idea of proportional hazard was briefly summarized to help non-specialists understand the results. But, despite lacking the proper background to evaluate the work, it seems numerous physicians were perfectly happy to critique it in a professional journal without even bothering to look up what a Cox proportional hazards model is on Wikipedia. It is an interesting case study in the intersection of science, medicine, politics, and the Dunning-Kruger effect.

Anyway, as a non-Brit, I don’t really understand the political issue at stake here, except to note that I wish we had an NHS here in the US, and from everything I’ve learned watching British TV, it sounds like letting the Tories meddle with the NHS is probably a bad idea on principle.

You know, you’re absolutely right. I was unfair to the original study. I didn’t intend to be, but in retrospect, I wish I had approached the article differently.

What I was attempting to do was focus my criticism on the conclusions being drawn for political reasons by other actors rather than on the study itself, and my examples were intended as just a starting point to show how many factors are at play, so you can’t draw causal conclusions from this kind of research without deliberately investigating them. My major criticism of the researchers is in the ways they encouraged this conclusion rather than considering it to be one of many potential causes to be further investigated. This seems especially the case in the updated analysis, right in the subtitle. I didn’t intend this as a criticism of the study itself, but I can see now that my omissions give the impression that the study itself is problematic. (I think it does have some potential problems for other reasons, but not in failing to at least attempt to control variables.)

To be fair to the commenters on the updated analysis, I’m not sure that the researchers were clear that the methodology and further details were in the original paper and that they were not repeating that information in this update (although readers should have figured this out). That might be one reason so many comments seem to be misunderstanding the Cox model and raising objections that the original study actually addresses.

I do not think it’s unreasonable to look at reduced weekend services as a potential factor, but I don’t think this alone could explain the results, and it seems more sensible to assume that multiple factors are at play and interacting than that a single factor is the cause, until further study gives reason to think otherwise.

It’s interesting that they applied their model to a sample of U.S. hospitals as well and found similar results. I would think looking at the similarities between NHS and the US managed care hospital admissions procedures, etc., might be illuminating.

That the hazard ratio is flipped for dying in the hospital regardless of admission day could be partly explained by the importance of the immediate care one receives when first being admitted. In the 2012 study, the authors note this, but it doesn’t seem to apply in all cases:

They also acknowledge in the study that they could have limited their data to include only those who died in-hospital instead of including all deaths within 30 days of admission, whether the death occurred in-hospital or not. I can understand their reasoning for this, but I do wonder if any other out-of-hospital variables might come into play that could differ in the lives of people more likely to go in on the weekend than on a weekday.

Anyway, THANK you very much for your comments and criticisms. I really appreciate them.

My hat is off to you, and I agree completely with your criticisms of the original papers here.

In the recent 2015 paper, the title definitely suggests a stronger argument that expanded services would reduce patient deaths associated with weekend admissions than is actually made by the paper. Titling is a difficult art for scientific papers, as with any writing. In this case, I’m not sure I think the authors made the wrong decision by using a title that pushes their viewpoint, as their results at least motivate a discussion on the topic, but even so that choice is not without problems that are perfectly reasonable to criticize.

But, I think there is a really serious problem in the failure to provide adequate background to help a general medically-educated audience understand at least the basics of the model used and provide some guidance in interpreting their results. Considering that the authors evidently wished to provoke a policy discussion in the British medical community, such a background would have helped that conversation be more grounded. In their defense, high-profile, generalist journals like BMJ often have extremely restrictive word count and formatting requirements for their articles that make it difficult to include such background material (though I am not familiar with BMJ’s requirements in particular, this is a general trend). In my opinion, this is a real problem in the culture of science now: those papers which reach the broadest audience are also those least likely to contain sufficient detail to allow a broad audience to properly evaluate them. Nonetheless, as far as I was able to see, the authors made no effort whatsoever to emphasize the nature of their response metric; sometimes even a few sentences here can go a long way toward helping the reader understand, or at least helping them realize there is more relevant background that they should go read if they want to evaluate the work. I think this is an unfortunately common failing in the primary scientific literature.

Thanks for the thoughtful response! I always enjoy your work here on Skepchick.

I noticed the truncated y-axis right away, hella deceptive. I’d like to see clean data with more accurate tallies, but even if this were accurate, the trend could possibly be explained by the way people’s behavior differs on weekends: more partying means more overdoses, more impaired driving leading to more wrecks, etc.

In the US, I would also expect the increased rate of car accidents, bar fights and random shootings on weekends to be a factor. (The UK is reputed to be more civilized.)

Also, the basic variable, “death rate”, isn’t defined. Is it deaths per unit population, or deaths per unit hospital admission? How do they count people who are admitted on Wednesdays but die the next Saturday? How do they count people with chronic fatal illnesses (such as terminal cancer)? Are they admitted when they suffer a crisis, and die at a rate independent of the day of admission, or do they share in the weekend peak? They might even show a reverse cycle, because if they are doing fine on Friday, but suffer a sudden downturn on Saturday or Sunday, they might postpone admission to Monday, causing a peak early in the week.

The oddest bit is that the Tory’s solution to the problem is to through money at it. I’m sure the US Republicans would say it proves Obamacare doesn’t work and vote to defund it completely, and also Benghazi.

“throw money”, not “through money” :-)

The real measure, from the original work, is “relative hazard,” which is basically the probability of death given various factors (your condition, age, sex, hospital reputation, etc.) and given that you were admitted on a particular day of the week, divided by the probability of death given those same factors and that you were admitted on a Wednesday. Basically, they use a statistical model that lets them apportion out the total risk of death to a whole bunch of factors, including day of hospital admission, and assign a “relative hazard” number to each of these. The risk for a particular patient is something like the product of her relative hazards for all factors.
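Here is a rough Python sketch of that multiplicative idea. The hazard ratios are made up for illustration, apart from the 16% Sunday figure mentioned above, and this is only the arithmetic of multiplying relative hazards together, not the authors’ actual model.

```python
from math import prod

# Hypothetical hazard ratios, each relative to that factor's reference level.
# Only the Sunday value reflects a figure quoted in this thread.
hazard_ratios = {
    "emergency admission": 3.0,  # assumed for illustration
    "age band": 1.5,             # assumed for illustration
    "day of admission": 1.16,    # Sunday versus Wednesday
}


def combined_relative_hazard(ratios):
    """Multiply the per-factor relative hazards into one overall figure."""
    return prod(ratios.values())


# Same hypothetical patient, admitted on Sunday versus on Wednesday (ratio 1.0).
sunday = combined_relative_hazard(hazard_ratios)
wednesday = combined_relative_hazard({**hazard_ratios, "day of admission": 1.0})

print(f"Sunday relative to Wednesday, other factors equal: {sunday / wednesday:.2f}")
```

Whatever values the other factors take, they cancel out of the Sunday-to-Wednesday comparison, which is why the day-of-admission number is read as “for a given level of risk due to everything else in the model.”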

The death rate in question is the proportion of patients who died within 30 days after being admitted to the hospital. The original work ran a model looking at day of death instead of day of admission and found no significant effect (i.e., after you control for other factors, you’re no more likely to die on a weekend in the hospital than during the week; only if you’re admitted on the weekend). The patient’s illness was included as an explicit factor in the model; evidently the nature of the disease doesn’t seem to influence the “weekend effect” much, and they showed explicitly that cancer and cardiovascular patients had a similar pattern. Interestingly, it does seem that weekend-admitted patients are “sicker,” but this isn’t enough to fully account for the increased death rate in their model.

I’m still suspicious that this is some backdoor means for the Tories to cut NHS funding in some way, but I don’t know enough about British politics to have a good sense if that’s true.